M365 is a true catalyst for collaborative work, and it must therefore address the resulting increase in internal threats.

The importance of the M365 suite in business

The Microsoft 365 software suite offers businesses a critical set of collaborative services (Figure 1). Because these services handle a large volume of potentially sensitive data, they need to be secured with dedicated tools. Microsoft has therefore made a range of security products available to reduce these risks.

Figure 1 – The features of the M365 suite.

Internal threats are often forgotten but increasingly present

M365 tenants, like any computer system, are an obvious potential target for external attackers. However, the internal threat should not be underestimated, especially since its proportion and impact are far from negligible. In 2020 in North America, nearly 19%[1] of threat actors came from inside the company. Different categories of insider threats can be distinguished:

- Sabotage, where an employee uses legitimate access to damage or destroy company systems or data in order to harm the company;

- Fraud, where an insider modifies or destroys data for personal gain;

- Data theft, where the insider steals the company’s intellectual property in order to resell it, keep it for themselves or use it in an upcoming job. The insider may also steal information on behalf of another organization (a competitor or a government, for example) for the purpose of industrial or state espionage;

- Clumsiness, which covers mistakes or unintentional actions by a negligent employee.

These threats are also associated with potential actors:

- Malicious employees who aim to carry out acts of sabotage (e.g. modification or deletion of data).

- Employees leaving a company, especially if they are forced to leave. In this case, the biggest associated threat is data theft. According to a study[2], 70% of employees say they take with them the work they have produced for the company, even though it does not belong to them.

- Internal agents, who work for an external group and give it access to company resources. These people may have been bribed or even blackmailed.

- Disobedient people who circumvent the company’s security policies, for example by using personal online data storage solutions, creating a risk of data leakage.

- External workers who are not employees but who have access to the company’s information system (service providers, suppliers, partners, etc.).

- Careless workers, who are not aware that their actions create vulnerabilities for the company. In most cases, security breaches involving an employee are not intentional but come from negligent workers (56% of cases in 2021[3]). For example, an employee may lose an unencrypted device containing sensitive data, or have it stolen, which could put the business at risk. They may also simply share files with the wrong people or delete important items without realizing it.

Microsoft’s response to these insider threats

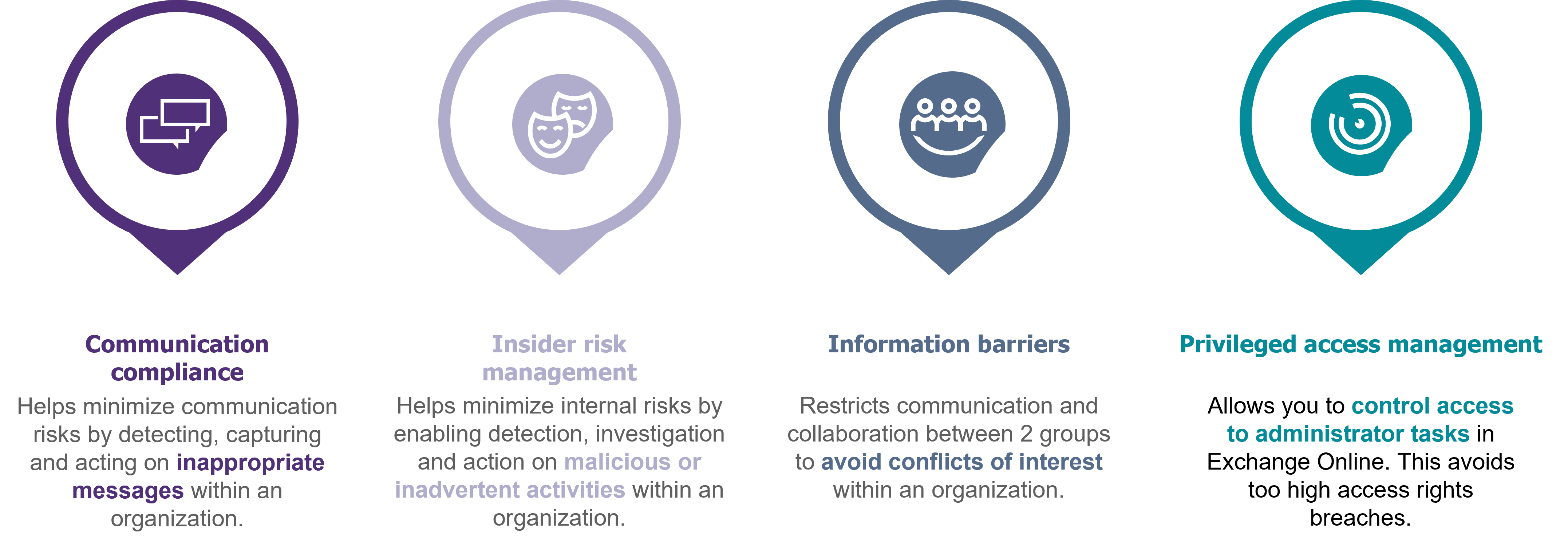

One of Microsoft’s challenges today is to help its customers protect themselves against internal risks. Microsoft currently offers a group of solutions to combat insider threats called “Microsoft Purview”, formerly known as the “compliance center” (see Figure 2[4]).

This group includes:

- “Communication compliance”: minimizes communication risks by making it possible to detect, capture and act on risky messages within an organization;

- “Information barriers”: restricts communication and collaboration between two groups to avoid internal conflicts of interest;

- “Privileged access management”: controls access to administrator tasks in Exchange Online to avoid excessive access rights.

Finally, Microsoft Purview now also includes the “Insider Risk Management” (IRM) module. This module helps minimize internal risks by detecting, investigating and acting on malicious or unintentional activities within an organization.

Figure 2 – Microsoft’s insider threat management modules.

Insider Risk Management, the Microsoft solution that helps organizations address some of these insider threats

As explained earlier, IRM helps minimize internal risks. In practice, the tool works in several phases (detailed later) and is based on proven data from Microsoft workflows. It includes predefined data leakage scenarios, such as an employee’s resignation or dissatisfaction. These scenarios make it easier to analyze risky activities by providing context. The tool can use metadata related to the targeted scenario, such as an employee’s departure date or annual review date. It can thus assess the risk level of users and generate alerts at the appropriate time.

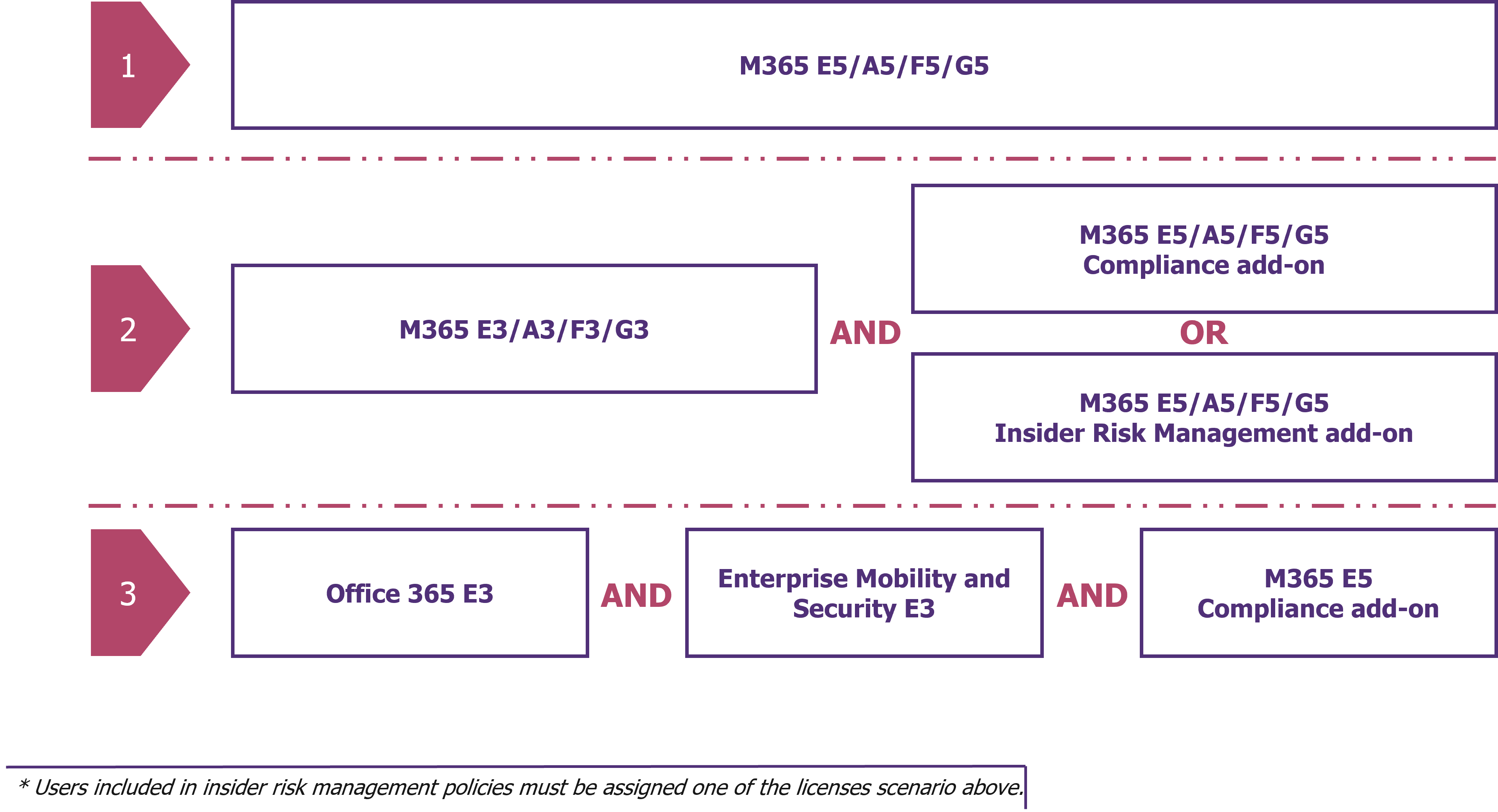

To do this, Insider Risk Management relies on different modules of M365. IRM is an advanced solution and therefore requires specific licenses. Several licensing options give access to this module:

Figure 3 – Three ways to get Insider Risk Management with Microsoft licenses.

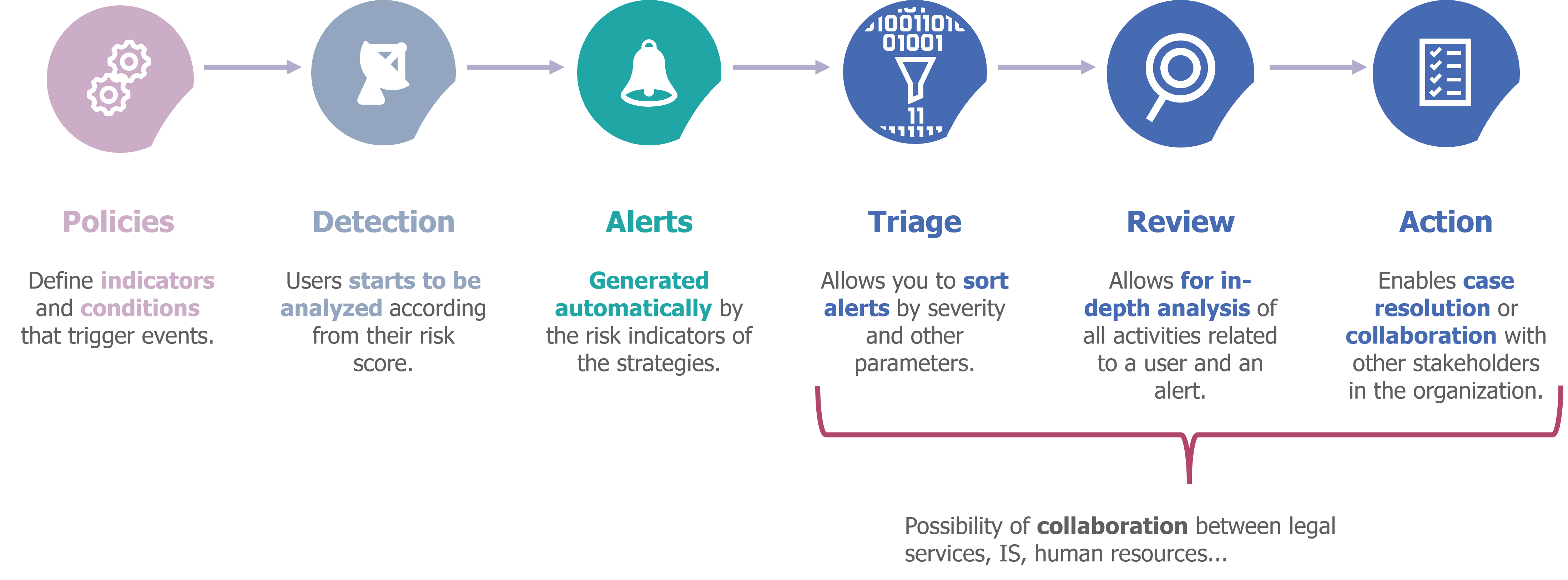

A tool that works in 6 phases

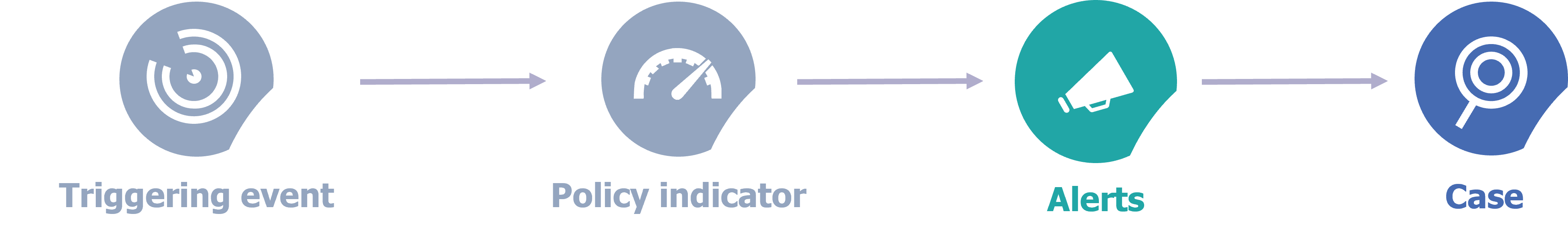

The first is the strategy (policy) creation phase, which defines the triggering events and risk indicators that lead to the generation of alerts.

The second is detection, when IRM begins to analyze a user’s activities as a result of suspicious activity (a triggering event).

The third is the alert generation phase: alerts are generated automatically from the risk indicators defined in the strategies.

Once an alert is raised, IRM provides a triage step that allows administrators to classify alerts based on severity and other parameters.

Then comes the inspection phase, which makes it possible to analyze in depth all the activities related to a user and an alert by creating an in-depth analysis file (a “case”).

Once the alert has been processed, the action phase begins. It consists of resolving the case, either by alerting the user to unusual behavior or by alerting the organization’s stakeholders (legal, IS, human resources, etc.), who can take appropriate action.

Figure 4 – The 6 phases of IRM operation.
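The last three phases (triage, inspection, action) can be pictured as a small alert lifecycle. The sketch below is only an illustration of that flow: the status names, the severity levels and the two action labels are assumptions made for this example and are not taken from the IRM product or its API.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    NEEDS_REVIEW = "needs review"   # alert generated, waiting for triage
    DISMISSED = "dismissed"         # judged to be a false positive
    CONFIRMED = "confirmed"         # a case is opened for inspection
    RESOLVED = "resolved"           # the case is closed with an action


# Hypothetical severity levels and resolution actions, for illustration only.
SEVERITY_ORDER = {"high": 0, "medium": 1, "low": 2}
ACTIONS = {"notify_user", "escalate_to_stakeholders"}


@dataclass
class Alert:
    user: str
    severity: str
    status: Status = Status.NEEDS_REVIEW
    action: str = ""


def triage(alerts):
    """Triage phase: list the alerts awaiting review, most severe first."""
    pending = [a for a in alerts if a.status is Status.NEEDS_REVIEW]
    return sorted(pending, key=lambda a: SEVERITY_ORDER[a.severity])


def confirm(alert):
    """Inspection phase: confirming an alert opens a case."""
    if alert.status is not Status.NEEDS_REVIEW:
        raise ValueError("only alerts awaiting review can be confirmed")
    alert.status = Status.CONFIRMED


def resolve(alert, action):
    """Action phase: close a confirmed case with one of the two outcomes."""
    if alert.status is not Status.CONFIRMED:
        raise ValueError("only confirmed alerts can be resolved")
    if action not in ACTIONS:
        raise ValueError(f"unknown action: {action}")
    alert.status = Status.RESOLVED
    alert.action = action


alerts = [Alert("alice", "low"), Alert("bob", "high"), Alert("carol", "medium")]
queue = triage(alerts)            # bob first, then carol, then alice
confirm(queue[0])                 # open a case for the most severe alert
resolve(queue[0], "notify_user")  # close it by notifying the user
```

Forcing an alert through `confirm` before `resolve` mirrors the order of the phases described above: a case must exist before an action is taken on it.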

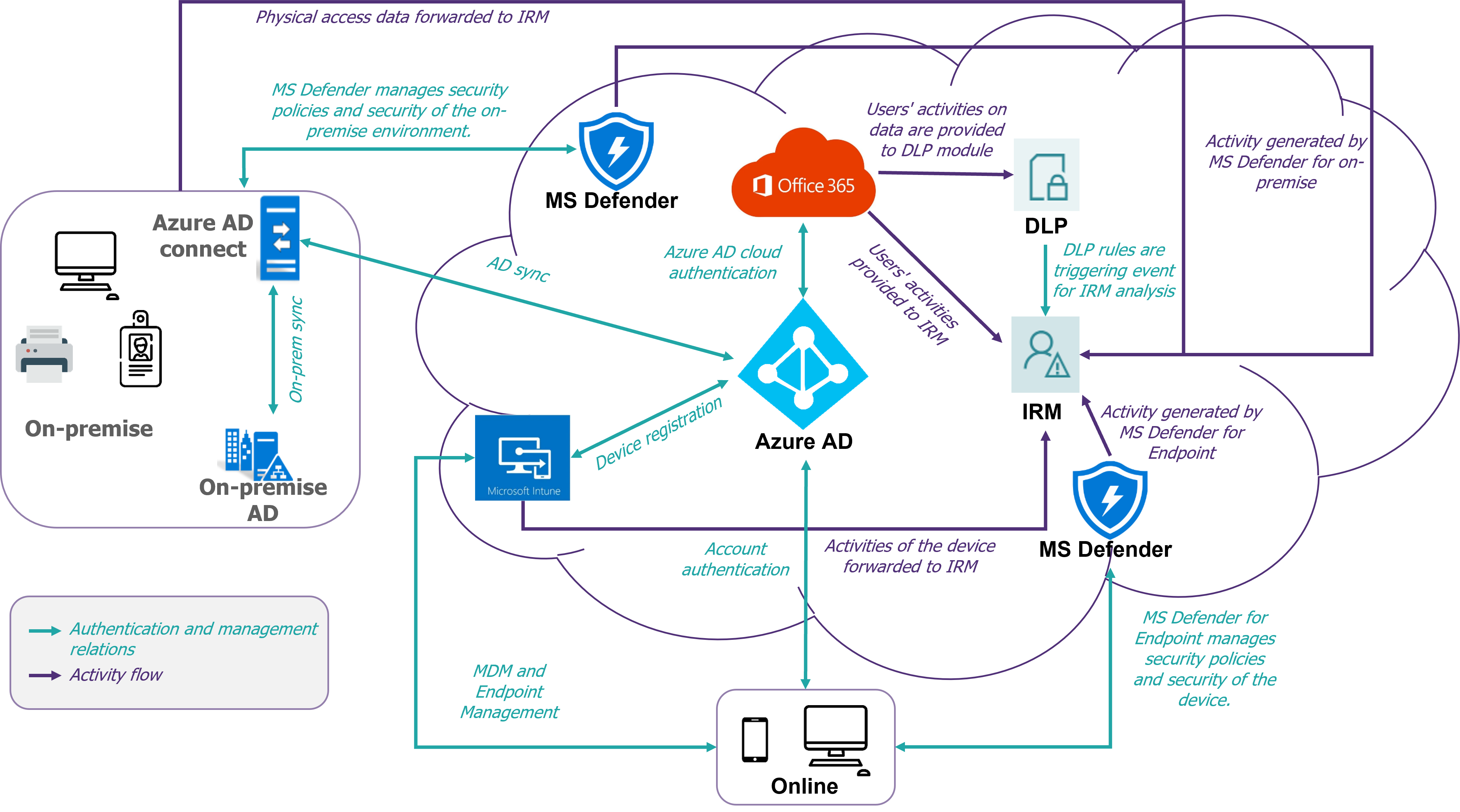

To work, Insider Risk Management fully integrates with the M365 components of the tenant on which it is deployed (see diagram in Figure 5). Indeed, the data received from other modules allows the analysis of workflows and different activities.

Figure 5 – Overall IRM architecture diagram

To begin, define detection strategies

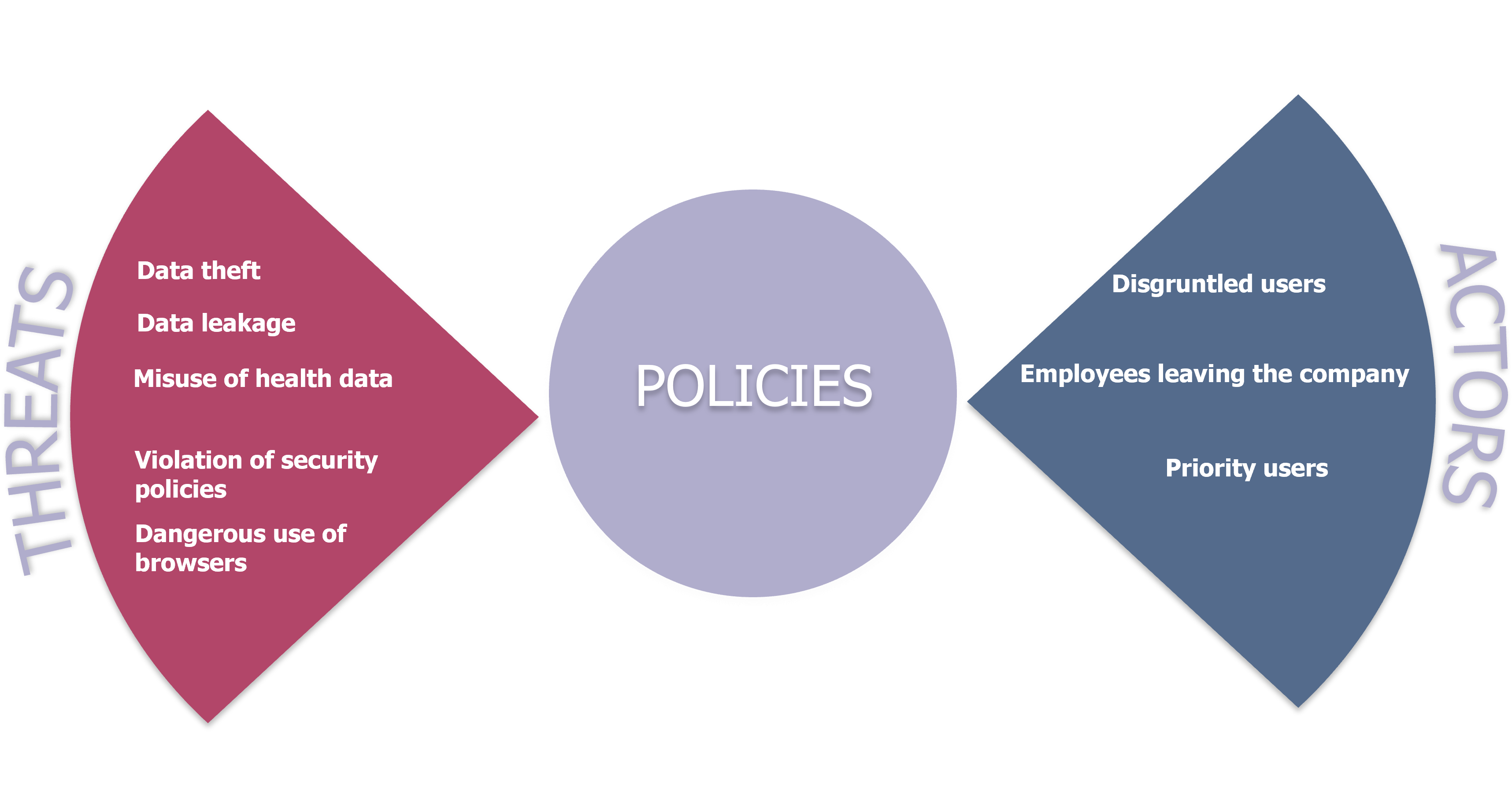

As presented above, the first step is the definition of strategies, each of which is based on one of the five scenarios established by Microsoft:

- Data theft: combats the theft of company data for profit or personal interest. This scenario applies to users leaving the company (voluntarily or not).

- Data leakage: combats the intentional or unintentional sharing of sensitive information.

- Misuse of health data: combats the illegal exploitation of health information by employees.

- Violation of security policies: combats the installation of malware and the uninstallation or disabling of certain services.

- Dangerous use of browsers: detects browsing behavior that may violate the company’s charter (visiting sites that incite hatred or contain adult content) or that presents a threat (phishing sites).

These scenarios are available as templates on which strategies are built. They can cover any type of user in an organization, but IRM provides more precision and context by targeting specific categories of users. Microsoft offers three types of actors:

- Disgruntled users: An employee’s behavior can be influenced by many events, such as a performance evaluation or organizational changes (including a “demotion” in the organization). To take this into account, IRM allows you to import data related to performance and organization.

- Employees leaving the company: An employee can change companies or be fired and therefore become a threat to the organization they worked for.

- Priority users: Users with privileged access or with high-risk responsibilities.

To detect these cases, IRM allows you to import data from HR tools (evaluation, organization, resignations, dismissal), and data related to user authorizations.

Figure 6 – Components of an Insider Risk Management strategy

Defining a detection strategy (scenario and actors) determines the associated list of triggering events and indicators that can be configured.
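One way to picture a strategy is as a scenario template combined with a user scope, plus the triggering events and indicators that follow from that choice. The sketch below is a minimal data model under that assumption. The class names, field names and the example event and indicator labels are hypothetical and do not come from the IRM product.

```python
from dataclasses import dataclass
from enum import Enum


class Scenario(Enum):
    """The five scenario templates listed in this article."""
    DATA_THEFT = "Data theft"
    DATA_LEAKAGE = "Data leakage"
    HEALTH_DATA_MISUSE = "Misuse of health data"
    SECURITY_POLICY_VIOLATION = "Violation of security policies"
    RISKY_BROWSING = "Dangerous use of browsers"


class UserScope(Enum):
    """All users, or one of the three actor types listed in this article."""
    ALL_USERS = "All users"
    DISGRUNTLED_USERS = "Disgruntled users"
    DEPARTING_USERS = "Employees leaving the company"
    PRIORITY_USERS = "Priority users"


@dataclass(frozen=True)
class Strategy:
    name: str
    scenario: Scenario
    scope: UserScope
    triggering_events: tuple
    indicators: tuple

    def __post_init__(self):
        # Activities are only analyzed after a triggering event,
        # so a strategy without one would never produce an alert.
        if not self.triggering_events:
            raise ValueError("a strategy needs at least one triggering event")


# Hypothetical example: event and indicator labels are invented for illustration.
leak_strategy = Strategy(
    name="Data leak - disgruntled users",
    scenario=Scenario.DATA_LEAKAGE,
    scope=UserScope.DISGRUNTLED_USERS,
    triggering_events=("performance_review_imported",),
    indicators=("print", "usb_copy", "email_to_external"),
)
```

Keeping the scenario and the user scope as separate fields reflects the idea that the same template yields different triggering events and indicators depending on who is targeted.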

Take the “data leak” scenario as an example: it includes a set of indicators and triggering events to prevent accidental and intentional data leaks. Depending on the users targeted by the strategy, however, the indicators and triggering events differ (see table below). In this example, the policy can apply to all users, to priority users (for example, a group of users working on sensitive data), or to disgruntled users (for example, a focus on users who have been denied a promotion). The detection mechanism and the importance of the indicators and triggering events specific to the selected user profiles are detailed in the rest of this article.

| | All users | Priority users | Disgruntled users |
|---|---|---|---|
| Triggering events | | | |
| Indicators | | | |

Next, detect suspicious activities

Once the policy creation phase is complete, the detection phase (Figure 7) is used to generate alerts. This step is the most important one for detecting malicious behavior. Note that without a triggering event present in an insider risk management strategy, user activities are not analyzed by IRM. Triggering events depend on the chosen detection scenario. As mentioned earlier, a triggering event can be a resignation date, mass exfiltration activities (printing, downloading, copying to USB, sending email, etc.) or deletion. A triggering event can also be a sequence of actions, such as a file being downloaded, then exfiltrated and finally deleted.
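The sequence-based trigger mentioned above (download, then exfiltration, then deletion) can be sketched as an ordered-subsequence check on each file’s activity. This is a simplified illustration, not how IRM implements it: the action labels, the event format and the function names are assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical action labels for the sequence described in the text.
EXFILTRATION_SEQUENCE = ("download", "exfiltrate", "delete")


def occurs_in_order(actions, pattern):
    """Return True if every step of pattern appears in actions, in order.

    Other actions may happen in between; only the relative order matters.
    """
    remaining = iter(actions)
    # "step in remaining" consumes the iterator up to the first match,
    # so the next step is only searched for in later actions.
    return all(step in remaining for step in pattern)


def files_matching_sequence(events, pattern=EXFILTRATION_SEQUENCE):
    """Group chronological (action, filename) events by file and return
    the files whose activity contains the full sequence."""
    actions_per_file = defaultdict(list)
    for action, filename in events:
        actions_per_file[filename].append(action)
    return sorted(
        name
        for name, actions in actions_per_file.items()
        if occurs_in_order(actions, pattern)
    )


events = [
    ("download", "roadmap.docx"),
    ("download", "budget.xlsx"),
    ("exfiltrate", "roadmap.docx"),
    ("delete", "budget.xlsx"),
    ("delete", "roadmap.docx"),
]
suspicious = files_matching_sequence(events)  # ["roadmap.docx"]
```

Here `budget.xlsx` is downloaded and deleted but never exfiltrated, so only `roadmap.docx` matches the full sequence.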

Figure 7 – Focus on the detection process.

After a user performs a triggering event, they become a target of the associated detection policy. From then on, the user’s activities covered by the risk indicators defined in this strategy are analyzed. Risk indicators can relate to Office activities (manipulating files on SharePoint, OneDrive, Teams…), device activities (printing, renaming, creating hidden files, using USB keys, installing software…), browsing activities (accessing malicious sites, dangerous content…) and activities in other cloud applications (thanks to Microsoft Defender for Cloud Apps). If one of these indicators exceeds a certain threshold (defined in the policy), an alert is generated. If an IRM administrator confirms that the alert is not a false positive, a case is opened so that the targeted user’s activities can be analyzed in detail.
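The gating logic described above (no analysis without a triggering event, then an alert when an indicator exceeds its threshold) can be sketched as follows. This is a deliberately simplified model: it assumes that only activities recorded after the triggering event are counted and that each indicator is a simple count. The indicator names, thresholds and dates are invented for illustration.

```python
from collections import Counter
from datetime import datetime


def indicators_over_threshold(activities, trigger_time, thresholds):
    """Return the indicators whose count strictly exceeds its threshold.

    activities   -- list of (timestamp, indicator_name) tuples
    trigger_time -- when the triggering event occurred, or None if none did
    thresholds   -- indicator_name -> maximum tolerated count (from the policy)
    """
    if trigger_time is None:
        # Without a triggering event, the user's activities are not analyzed.
        return []
    counts = Counter(
        indicator
        for timestamp, indicator in activities
        if timestamp >= trigger_time and indicator in thresholds
    )
    return sorted(name for name, n in counts.items() if n > thresholds[name])


# Hypothetical data: a triggering event on 1 March, then three USB copies.
trigger = datetime(2023, 3, 1, 9, 0)
activities = [
    (datetime(2023, 2, 28, 17, 0), "usb_copy"),  # before the trigger: ignored
    (datetime(2023, 3, 1, 10, 0), "usb_copy"),
    (datetime(2023, 3, 1, 11, 0), "usb_copy"),
    (datetime(2023, 3, 2, 9, 30), "usb_copy"),
    (datetime(2023, 3, 2, 9, 45), "print"),
    (datetime(2023, 3, 2, 10, 0), "print"),
]
thresholds = {"usb_copy": 2, "print": 5}

exceeded = indicators_over_threshold(activities, trigger, thresholds)  # ["usb_copy"]
no_trigger = indicators_over_threshold(activities, None, thresholds)   # []
```

Each name returned would correspond to one generated alert, which an administrator then triages before deciding whether to open a case.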

Finally, process the generated alerts

When a threat is confirmed and a case has been opened, IRM and global administrators can see the content that has been downloaded, shared, printed, viewed, etc. Stakeholders can then decide what action to take in response to the threat: either send a notification to the user concerned or escalate the case for investigation. However, it is important to remember that Insider Risk Management cannot restrict the actions of a malicious user; it remains an alerting and inspection tool that supports decision-making.

IRM is a powerful and promising solution but is not yet sufficiently mature

While Insider Risk Management requires a good understanding of all M365 services and Azure AD, it leverages the capabilities of security services to provide better protection against insider threats. As described earlier, Insider Risk Management is a very effective tool that analyzes all workflows and adapts easily to the activities of companies and users.

However, some points remain to be clarified and improved. The effectiveness of IRM is offset by its rather long reaction time (about 12 hours to detect activities) and by an interface that is not intuitive enough. Microsoft documentation can also be difficult to understand, or even incorrect in some cases (wrong date format for HR data, for example). In addition, as things stand, the scenarios presented could also be monitored by a company’s SOC teams (via specific scripts or alerts, for example). As a result, the tool is not yet widely used by companies. Nevertheless, the tool’s growing maturity should be monitored closely, as changes are made regularly (such as the addition of new detection scenarios).

In conclusion, what questions should be asked at the outset?

Define the concrete use cases to be covered and evaluate the added value compared to existing alerting (within the SOC).

Evaluate the impact of this tool on personal data, given the extent of the analysis it can perform.

Think about the organization to implement (responsibilities, alert handling process, strategy evolution process).

[1] Source: Verizon’s 2021 Data Breach Investigations Report (link).

[2] Source: Article “What happens to your data when a departing employee leaves?” on S2|DATA (link).

[3] Source: 2022 Cost of Insider Threats Global Report from Ponemon Institute (link).

[4] Based on Microsoft documentation for the Insider Risk Management product.