Following the GDPR’s adoption in 2016, most companies are taking a structured approach to compliance and, now that the May 2018 deadline is over, most of them enacted their compliance plan. But we are seeing some interpretations of the regulation that could be considered inaccurate. Therefore, we’re publishing a series of three articles aimed at dismantling these misconceptions. After a first article on whether consent is obligatory, this second article covers anonymization.

Misconception #2 — GDPR means that data must be anonymized

People often confuse anonymization, pseudonymization, and encryption. Aside from the fact that these techniques are very different, and are used in very different scenarios, none of them is mandatory under the GDPR. Some, however, are strongly recommended.

Anonymization enables data to be removed from the GDPR’s scope

Personal data means data associated with an identifiable, person.

Data is considered anonymous when the person concerned is no longer identifiable by any means whatsoever and , i.e. no data or data set can be traced back to the person’s identity. The data, then, is no longer personal data.

Pseudonymization and encryption are not the same as anonymization: see below.

Anonymization is not required by RGPD

In the whole of the GDPR, anonymization is mentioned only in a recital. This says that anonymous data, , is not subject to the regulation.[i]

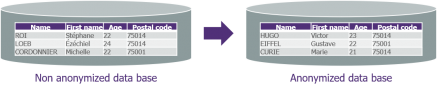

Figure 1 / An example of anonymization using four columns, where the following techniques are applied: substitution on the first and last names, blurring (random variations that retain the overall average) on age, and shuffling on the postal codes. Having done this, the data can still be used for application testing (because its business significance is intact) and for certain statistical analyses (because the distribution has been maintained).

Anonymizing data, in GDPR terms, is as effective as erasing it.

However, while erasure may involve one attribute only (for example, a person who exercises their right to have their email address erased), anonymization has to cover all recorded data, i.e. all data that could allow a person to be identified. Indeed, it makes no sense to anonymize certain attributes (for example, first and last name) of a record while leaving the others unmodified (for example, email address, postal address, etc.).

To note: anonymization can never be considered absolute or 100% guaranteed! In particular, the residual re-identification risk must be taken into account because, by applying a more detailed analysis, an attacker may be able to determine a person’s identity with a high degree of probability. This risk will always exist and must be quantified. [ii]

Anonymization is efficient for precise use cases

As anonymization is, by definition, irreversible, it cannot generally be applied to production data used by business processes, because these would then be unusable. Therefore, what justifies anonymization rather than erasure of data is when it is to be used for:

- statistical analysis (for example, for marketing purposes);

- tests carried out during the software development cycle (in non-production environments: unit tests, integration, qualification, and acceptance testing).

Another use case is where a system does not allow data to be physically deleted. To erase it in this case, logical deletion and data destruction should be carried out, i.e. the application of a basic anonymization technique that consists of replacing the data with values that have no meaning (for example, replacing alphanumeric values by Xs and numbers by 0s or 9s). Of course, this technique makes statistical analysis or functional tests impossible.

Anonymization techniques are very varied and depend on the type of data and its intended use. There are numerous tools available in this maturing market.

Pseudonymization: an effective means of protection

In summary, pseudonymization consists of reversibly splitting people’s identifying data, using non-explicit pseudonyms (for example, random character strings) as the means of effecting the correspondence. This limits exposure to non-identifying, or quasi-identifying, data.

But pseudonymizing is not anonymizing! Indeed:

- it is reversible: you can find a person’s identity by using the correspondence table;

- data that is left unmodified can be quasi-identifying, which means that more detailed analysis could be used to achieve identification.

A technology mature for specific sectors, promoted by GDPR

Pseudonymization itself is not mandatory, but it is considered a valid technique for securing data—and securing data is mandatory! [iii] , [iv]

Pseudonymization, as well as its variants such as tokenization and hashing, are generally implemented in production to mask personal data as a function of the user’s role; the aim is simply to limit the exposure of personal data and, thus, the risks of leakage and attack. In contrast with anonymization, the market here is more mature (in particular, solutions have been developed in PCI-DSS contexts); but it doesn’t automatically follow that the same solutions represent the best choices for both anonymization and pseudonymization.

Encryption: an essential means of protection, but one that has its limits

Encryption is the reversible transformation of data—making it unreadable by applying an encryption function. The operation can be reversed only by using the encryption key, which is held by the relevant company or person. This greatly reduces the impact of data leakage.

Again, it should be noted that encryption is not anonymization! It can be reversed: key holders can access the data.

Encryption is promoted by GDPR

Encryption may mean that there is no need to report a data leak, when the leaked data is encrypted.[v]

Encryption itself is not mandatory, but it is considered a valid technique for securing data—and securing data is mandatory! [iii]

Encryption has been in widespread use for years and is standard in most data storage and transfer technologies. In an ideal world we’d see this practice extended to all environments: production and non-production, individual and shared storage spaces, etc. However, the impact of doing this is not negligible: in production, encryption has an impact on real-time flows because encryption-decryption operations take time and reduce performance; outside production environments, access to databases is, , granted to many different players (developers, testers, , etc.) who explore their contents directly and manually, something that makes encryption, as a general approach, difficult.

None of these techniques—anonymization, pseudonymization, or encryption—are imposed by the GDPR. They are, however, all suggested or recommended—but in specific cases, and to meet particular needs. Anonymization, which is irreversible, makes it possible to remove data from the GDPR’s scope, generally for the purposes of application testing or statistical analysis; to do this it must preserve the business significance and distribution of the data. In production, on-the-fly pseudonymization is used to limit data exposure to what is strictly necessary, according to the needs of the user who will access it: certain values are reversibly replaced by others that have no meaning. Lastly, the more widely used technique of encryption reversibly encrypts the values in a database, reducing the impact of any data leak because the data can be decrypted only by its key holders.

Protecting the data we hold is essential, but does that allow us to retain it indefinitely? It is commonly believed that the GDPR specifies a maximum time for data retention. This belief will be addressed in a third and final article in this series.

[i] Recital 26

[ii] An example of an attack for journalistic purposes: Oberhaus, D. (08/11/2017). “Votre historique de navigation privée n’est pas vraiment privé.” Available at: https://motherboard.vice.com/fr/article/wjj8e5/votre-historique-de-navigation-privee-nest-pas-vraiment-prive [FR], accessed 01/08/2018.

[iii] Recital 28

[iv] Article 32, Paragraph 1

[v] Article 34, Paragraph 3

[vi] Article 32, Paragraph 1